The central quantity is variational free energy. You can think of it as a tractable upper bound on surprise (the negative log evidence, -ln p(o)) that the agent can minimise in place of surprise itself.

Core objective

\[

F[q] = \mathbb{E}_{q(s)}\left[\ln q(s) - \ln p(o, s)\right]

\]

Here, \(o\) are observations, \(s\) are hidden states, \(p(o, s)\) is the generative model, and \(q(s)\) is the agent’s approximate posterior belief over states.
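To make the objective concrete, here is a minimal sketch for a discrete model with two hidden states. The prior, the likelihood table, and the function name `free_energy` are assumptions chosen only for illustration. The sketch evaluates F for two choices of q and compares each with the surprise, -ln p(o).

```python
import math

def free_energy(q, joint_o):
    """F[q] = E_q[ln q(s) - ln p(o, s)] for one fixed observation o.

    q       -- approximate posterior over hidden states (list of probabilities)
    joint_o -- p(o, s) evaluated at the observed o, one entry per state
    """
    # Terms with q(s) = 0 contribute nothing (0 * ln 0 is taken to be 0).
    return sum(qs * (math.log(qs) - math.log(ps))
               for qs, ps in zip(q, joint_o) if qs > 0)

# Hypothetical two-state model; the numbers are illustrative only.
prior = [0.5, 0.5]                      # p(s)
likelihood = [[0.9, 0.2],               # likelihood[o][s] = p(o | s)
              [0.1, 0.8]]
o = 0                                   # the observation actually received

joint_o = [likelihood[o][s] * prior[s] for s in range(len(prior))]  # p(o, s)
evidence = sum(joint_o)                 # p(o)
surprise = -math.log(evidence)          # -ln p(o)
exact_posterior = [p / evidence for p in joint_o]                   # p(s | o)

F_prior = free_energy(prior, joint_o)            # beliefs not yet updated
F_exact = free_energy(exact_posterior, joint_o)  # beliefs equal the posterior

print(f"surprise        = {surprise:.4f}")
print(f"F[q = prior]    = {F_prior:.4f}")
print(f"F[q = p(s | o)] = {F_exact:.4f}")
```

Free energy is never below surprise for any q, and the two coincide when q equals the exact posterior, which is why minimising F with respect to q amounts to approximate Bayesian inference.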

In practice, this quantity can be unpacked in a way that is often more intuitive:

Accuracy–complexity form

\[

F = \text{complexity} - \text{accuracy}

\]

So a good model explains the data well, but without becoming needlessly complicated.
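The two terms can be written out with a short derivation. It assumes only that the generative model factorises as \(p(o, s) = p(o \mid s)\, p(s)\), which is the usual convention but is not stated explicitly above:

\[

\begin{aligned}
F[q] &= \mathbb{E}_{q(s)}\left[\ln q(s) - \ln p(s) - \ln p(o \mid s)\right] \\
&= \underbrace{D_{\mathrm{KL}}\left[q(s) \,\|\, p(s)\right]}_{\text{complexity}} - \underbrace{\mathbb{E}_{q(s)}\left[\ln p(o \mid s)\right]}_{\text{accuracy}}
\end{aligned}

\]

Complexity is the divergence of the approximate posterior from the prior, and accuracy is the expected log-likelihood of the observations under that posterior.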

Minimising free energy therefore does two jobs at once. It improves the fit to sensory data and regularises the beliefs used to explain those data.

This is why active inference is closely tied to variational Bayesian inference.

For action, the relevant quantity is expected free energy, which scores possible future policies.

Expected free energy

\[

G(\pi) = \mathbb{E}_{q(o, s \mid \pi)}\big[\ln q(s \mid \pi) - \ln p(o, s \mid \pi)\big]

\]

A policy \(\pi\) is a candidate course of action. The agent evaluates policies by asking what sorts of outcomes and uncertainties they are expected to generate.

A common and very useful decomposition splits expected free energy into two terms:

- risk: how far the outcomes expected under a policy diverge from preferred outcomes

- ambiguity: how uninformative future observations are expected to be about the hidden states

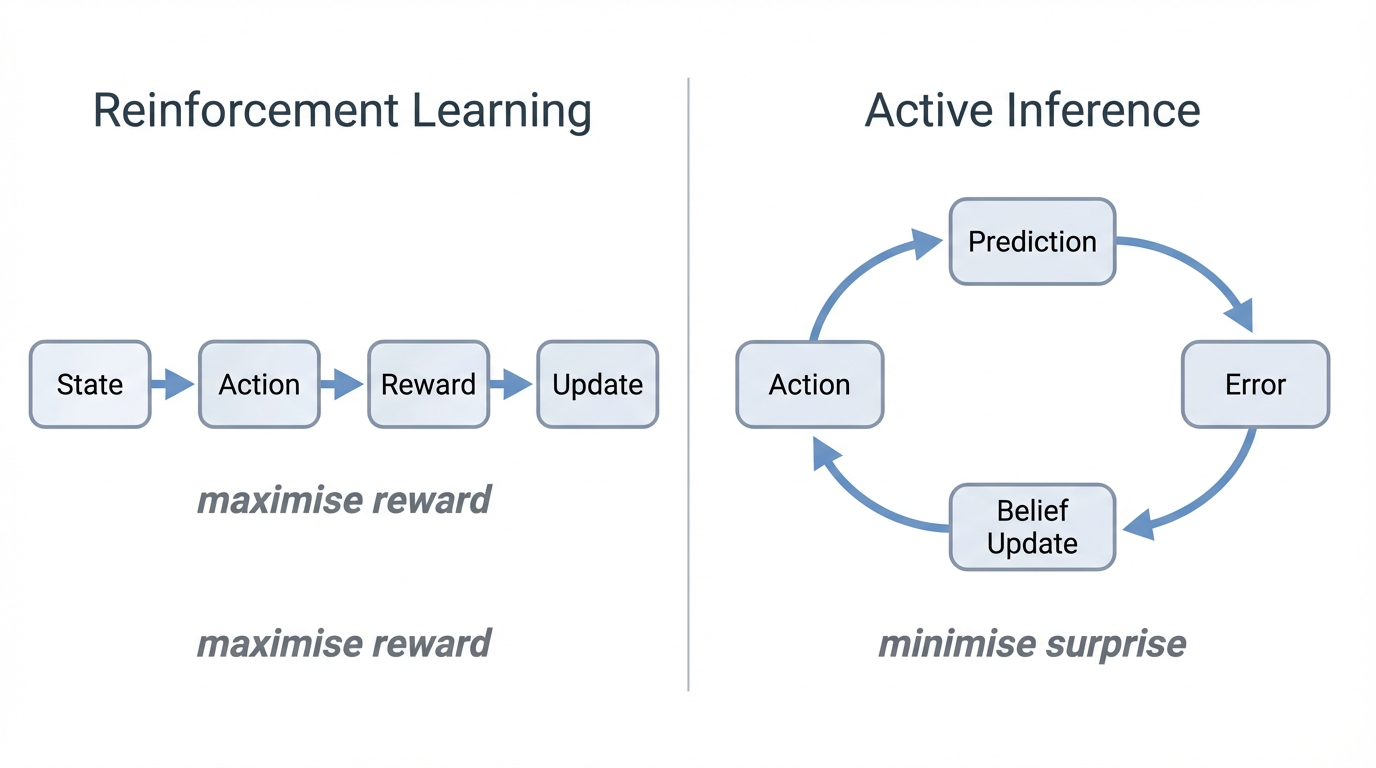

That means active inference naturally balances exploitation and exploration. The agent seeks outcomes it prefers, but it also values information when uncertainty is high.
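The risk and ambiguity terms can be sketched for a one-step, discrete problem. This uses one common form of the decomposition, in which risk is the KL divergence between predicted and preferred outcomes and ambiguity is the expected entropy of the likelihood. The likelihood table, the preferred outcome distribution, and the two policy names are hypothetical values chosen for illustration.

```python
import math

def kl(p, q):
    """KL divergence D[p || q] between two discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def entropy(p):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def risk_and_ambiguity(q_s, likelihood, preferred_o):
    """Return (risk, ambiguity) for one policy.

    q_s         -- predicted state distribution under the policy, q(s | pi)
    likelihood  -- likelihood[s][o] = p(o | s)
    preferred_o -- preferred distribution over outcomes
    """
    n_s, n_o = len(q_s), len(preferred_o)
    # Predicted outcomes: q(o | pi) = sum_s p(o | s) q(s | pi)
    q_o = [sum(q_s[s] * likelihood[s][o] for s in range(n_s))
           for o in range(n_o)]
    risk = kl(q_o, preferred_o)
    ambiguity = sum(q_s[s] * entropy(likelihood[s]) for s in range(n_s))
    return risk, ambiguity

# Hypothetical setup: state 0 yields reliable observations, state 1 does not.
likelihood = [[0.9, 0.1],
              [0.5, 0.5]]
preferred_o = [0.8, 0.2]
policies = {"go_to_state_0": [0.9, 0.1],   # q(s | pi) for each policy
            "go_to_state_1": [0.1, 0.9]}

scores = {}
for name, q_s in policies.items():
    risk, ambiguity = risk_and_ambiguity(q_s, likelihood, preferred_o)
    scores[name] = risk + ambiguity
    print(f"{name}: risk={risk:.3f} ambiguity={ambiguity:.3f} G={scores[name]:.3f}")

best = min(scores, key=scores.get)  # policy with the lowest expected free energy
print("selected policy:", best)
```

In this toy setup the policy leading to the informative state scores lower on both terms. With other numbers the two terms can pull in different directions, which is where the trade-off between preference and information becomes visible.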

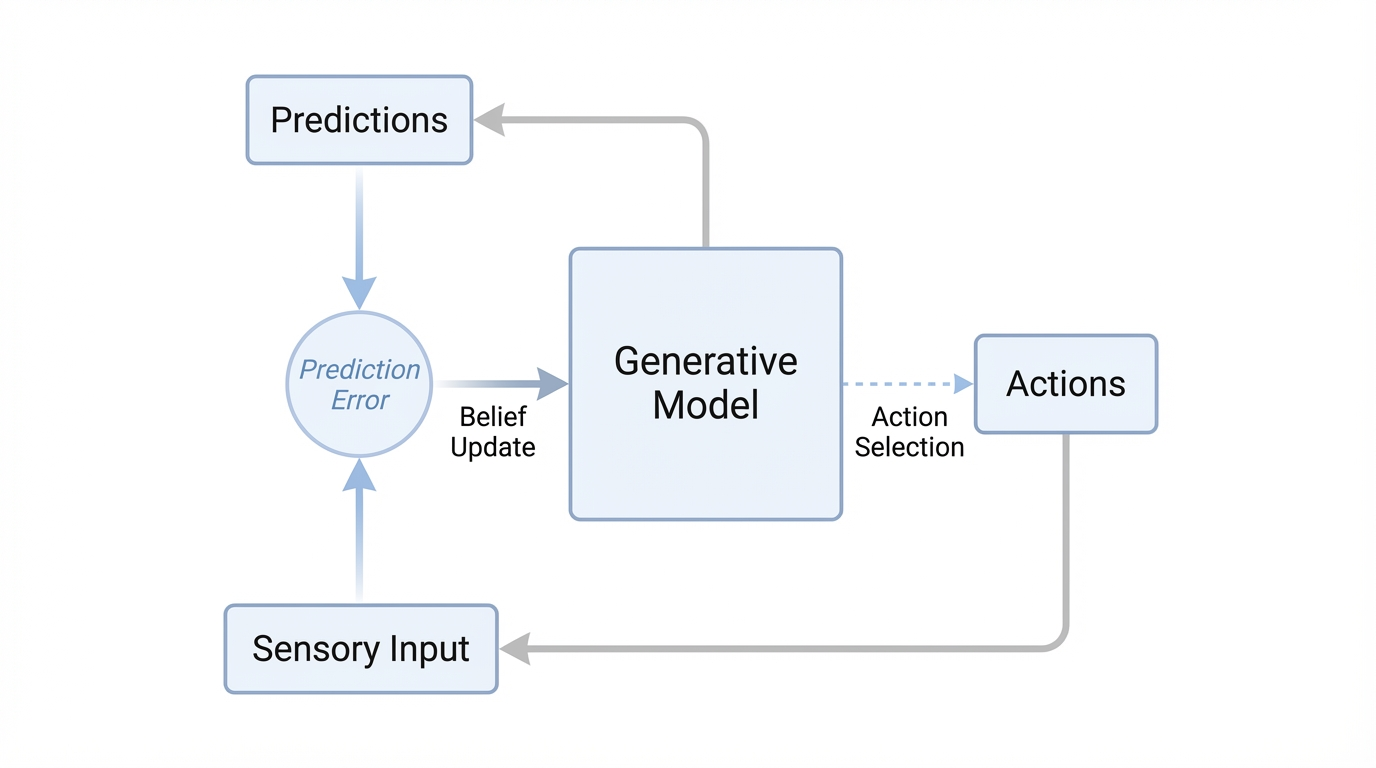

The important intuition: the same formalism that supports perception also supports planning. The agent is not switching between one system for inference and another for decision-making. It is using one probabilistic framework for both.

In continuous-state settings, especially in neuroscience and robotics, one often writes a generative model in state-space form:

State-space form

\[

\dot{x} = f(x, u, \theta) + \omega, \qquad o = g(x, \theta) + \nu

\]

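Hidden states \(x\) evolve according to dynamics \(f\), observations \(o\) are generated by an observation model \(g\), and the noise terms \(\omega\) and \(\nu\) capture uncertainty. As is conventional in this notation, \(u\) denotes inputs (or actions) and \(\theta\) the model parameters.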
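A minimal simulation shows how such a model generates data. The particular `f` (a leaky decay driven by a constant input), the linear `g`, the parameter names `decay` and `gain`, and the noise levels are all assumptions made for illustration. The noise is treated as white and integrated with an Euler-Maruyama step, which is a simplification; continuous-time treatments often assume smoother noise.

```python
import math
import random

def f(x, u, theta):
    """Hypothetical dynamics: leaky decay toward zero plus an input drive."""
    return -theta["decay"] * x + u

def g(x, theta):
    """Hypothetical observation model: a linear readout of the hidden state."""
    return theta["gain"] * x

def simulate(theta, u, x0=0.0, dt=0.01, steps=1000,
             sigma_omega=0.1, sigma_nu=0.05, seed=0):
    """Generate hidden states and noisy observations from the model."""
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    x = x0
    states, observations = [], []
    for _ in range(steps):
        # Euler-Maruyama step: state noise scales with sqrt(dt).
        omega = sigma_omega * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        x = x + f(x, u, theta) * dt + omega
        # Observation noise is added independently at each sample.
        nu = sigma_nu * rng.gauss(0.0, 1.0)
        states.append(x)
        observations.append(g(x, theta) + nu)
    return states, observations

theta = {"decay": 2.0, "gain": 1.5}
states, observations = simulate(theta, u=1.0)
print(f"final hidden state: {states[-1]:.3f}")
print(f"final observation:  {observations[-1]:.3f}")
```

Model inversion runs this process in reverse: given only the observations, the aim is to infer the hidden states and the parameters in `theta`.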

That is the bridge to a lot of our work at CPNS: the same formal machinery that can be used to model perception and action can also be used to model neural dynamics, synaptic mechanisms, EEG or MEG signals, and the effects of drugs or pathology on hidden circuit parameters.